

Please be advised these posts aren’t spoiler-free.

Sections

Dear Diary

Power On: The Story of Xbox

Intro

The Renegades

The Valentine’s Day Massacre

The Final Design

The Crunch

The Gamer’s Social Network

The Red Ring of Death

The Motion Revolution

The Disconnect

The Clean-Up

The X

The True Winner

Dear Diary

This is "Dear Diary," a rebrand of the Media Log prologue that better captures what the section really is. But, why a rebrand? Two reasons: one, I think it was a natural evolution; it went from speaking about the thought process behind creative decisions I make to taking some space to put my struggles into what is my most important output currently. While I believe my writing has my personality all over it, this section shows a more human side — the joys and sorrows, the triumphs and defeats. In a world where creative work is rapidly devaluing since AI companies decided their products must have a "creative" use, I have to assert my humanity — I must.

And two, it gives the wrong impression when the first section is entirely self-serving, especially when someone may come specifically for my take on a series. Which is fair — I'm not expecting every reader to be familiar with the format of the Media Log. Titling the section sets expectations accordingly; you can skip it if you don't want to read. It's unfortunate, but I understand if readers don't have the time and/or interest to go through my musings.

I’m just happy readers deem my words to be worth their time, however finite that time may be. If you’ve enjoyed these musings before, thank you — I hope to keep meeting your expectations.

With the announcement and rationale out of the way, I have an anecdote to share, inspired by the open enrollment period for health insurance happening nationwide as I write...

I’ve been seeing a therapist. As of late, I don’t think going to those sessions will produce anything of note.

Ever since the divorce of my parents, I’ve been in and out of therapy. It largely stemmed from my refusal to accept that they were separated, which threw a wrench in their efforts to remake their love lives. I must’ve visited around five different therapists throughout my teens, with one of two situations happening: either they found me a hard patient to work with, or Mom pulled me out of those sessions after some time with no visible progress. I didn’t enjoy the feeling of being treated like cattle: I’m just one among many they see every week, just another one for the insurance to pay (or deny!) for their services. Surely they mean well, and they’re trained in the nuances of their profession, but feelings seldom care about the facts.

Little did I know that, many years later, I would be one among many deciding whether their claims were approved or not…

Very briefly, I’m employed by an insurance company handling behavioral health claims. I’ve seen claims like those my current therapist and psychiatrist would submit to get paid. What I’ve seen both fascinates me… and scares me in equal measure. Frankly, when doctors say the US healthcare system is broken, believe them — and this is coming from someone inside the belly of the beast. Despite my belief in a single-payer healthcare system, this job is what’s putting food on my table now.

Prompted by a situation in which I believed I was misdiagnosed, I found that insurance companies have these customer-facing reports on how you use the insurance, including the diagnoses the doctors have reported. I’m aware this data has always been collected, but I’m not sure if it was a document we could always request. It wasn’t until insurance mobile apps started showing up that these reports became visible, as the apps needed to do more than just hold the digital card for your coverage.

When I saw my report, I saw some creative billing going on. Mind you, it’s not that any of the diagnoses I saw are *wrong.* However, going off who was doing the diagnosing, I wondered, ‘how is this relevant to the condition I saw X doctor about?’ One that made me laugh was a diagnosis by a vascular doctor I saw about a problem in my legs, which I suspected was circulation-related. Not only did I see the circulation-related diagnoses, but I also saw E66.0, or ‘obesity due to excess calories’ as defined by the International Classification of Diseases (ICD), a medical classification list maintained by the WHO, whose tenth revision is the one US insurers bill with. But… we didn’t talk about my weight during the consultation? He literally diagnosed me by eye…

Then again, it might very well be what they need to do to get paid — no doctor I’ve seen will disagree that I have an obesity problem. As for how it affects me as a patient, my line of work taught me how certain procedures can only be billed with certain diagnoses. Gross mischaracterization can lead to denied benefits and a not-so-pleasant invoice letter to the patient. To return to the anecdote that first brought me to this thought, when I was seeking an Adderall refill, I saw a diagnosis on the prescription that I don’t think accurately captures the ADHD I have: F90.1, or ADHD hyperactive.

I’d think F90.0 — ADHD inattentive — fits me better, but then I thought about how it may have been better to be coded as hyperactive to avoid pushback at the pharmacy. Given how difficult it is to navigate the US healthcare system, I’ll take whatever advantage I can get. And I *need* that Adderall, even if I’m ‘misdiagnosed.’

Back to my therapist anecdote: right about as I exited college, I just… gave up. The therapist I was seeing at the time, who I believe was also a psychiatrist, offered to put me on antidepressants. I refused. By that point, there was nothing left for us to talk about.

After roughly nine years, I sought therapy again. I was dealing with major emotional distress caused by my job at the time; the best advice the therapist gave me was, “Have you tried looking for another job?” Lady, I’ve told you *repeatedly* how there are no jobs that pay what I earn… A couple of months later, I sought someone else; this man only did telehealth. All I needed to do was turn on my laptop, connect the webcam, and we’re off. It was nice.

Some sessions in, I began to feel… aimless. I’m literally paying a stranger to listen to my troubles, distressing over petty stuff — there was even a time when I foolishly decided to take it ‘easy’ on him by talking about something positive that happened, despite life around me crumbling down. That was the breaking point: I was falling back into the same resistance, the same refusal to engage. I couldn’t do it anymore. I ended up ghosting the guy; I think he got the message.

Two years later, I felt ready to seek therapy again. Or so I thought. When I first saw the new therapist, we went through the initial interview: why am I here, what do I hope to achieve, yadda yadda yadda. By the end, he assigned me homework: read a book called Your Erroneous Zones by Wayne Dyer. I might’ve given the impression that I’m a reader, as I was already blogging at the time, but that has a very important asterisk: I can only get into the reading if it’s something that interests me. Psychology books… weren’t going to do it for me.

Every time I went in, he asked if I had read the book. Every time, I said no, I had not read it. This is where the friction began, with him questioning (rightfully so) what I aimed to get out of the sessions. I was shocked at how quickly the pleasantries were dropped, but I didn’t feel offended. If nothing else, I appreciate how forthcoming the question was, considering a society that often dances around the issue to protect someone else’s feelings.

I took the question seriously. After some soul-searching, I convinced myself that I wasn’t going to finish the book, nor was I going to listen to that podcast he suggested. By this point, it must be something subconscious. The only thing that makes sense to my rational side is that I never fully grew out of my rebellious phase. As it pertains to homework, I’m no longer a student; I left that at school many years ago. If I wasn’t going to get guidance on what I initially sought help for, it’s time and money I’m losing.

I had to stop seeing him. Not because I thought he wasn’t a good fit; quite the opposite, it might be the case that what I need is a no-bullshit therapist to whip me into shape. But my mind was in another place; I thought I was ready to commit to therapy. I wasn’t.

I'll continue wandering aimlessly, I guess... still trying to place a pin on whatever's wrong, still looking for meaning in a journey that might not have a destination…

Power On: The Story of Xbox

‘Power On: The Story of Xbox’ is a six-part docuseries produced by Ten100 released to commemorate Xbox’s 20th anniversary. It tells the story of the Xbox brand, from the DirectX team who championed the idea of a ‘Microsoft console,’ up until the unveiling of the Xbox Series X.

This retelling should be taken as Microsoft’s version of events. The unprecedented access to key information and staff was thanks to Tina Summerford, the current head of programming for Xbox, who pitched the idea of making an Xbox retrospective. Certain events can be independently verified, but others largely depend on primary sources and should be treated with caution, particularly when they support a compelling narrative. I’d encourage my readers to analyze this series with a critical lens: the story you’re being told is how Microsoft would like for you to see what happened, not what actually happened.

First, some back story: how did I get into Xbox? Let’s go back to 2002, the days when friends went to each other’s houses and shot the shit in the bedroom, as the adults claimed the rest of the house. One of my friends was an early adopter of the Xbox. What stood out to me was the fact that Agent Under Fire was among the games he owned. He knew I was a huge Bond fan, so he let me play through some of the missions — an opportunity I couldn't resist. Most games I played were shooters, giving me practice with 'trigger' buttons like the Nintendo 64's Z button. So I felt comfortable with the 'Duke' controller’s triggers.

Everyone I knew had PS2s, but I just wasn’t interested. As I saw my cousin sharing Xbox games with the other kids in the street, I felt left out. I was the uncool kid, what with his old PlayStation and Nintendo 64. My dad got me an Xbox for Christmas that year, bundled with Sega GT 2002 and Jet Set Radio Future. I especially loved JSRF and still listen to its soundtrack to this day. That friend I mentioned before, I got to play Halo thanks to him; I got so into it, I’d use my allowance money to buy the special edition of Halo 2 when the game released. I begged Mom to take me to GameStop — somehow, I managed to get it on launch day.

The Renegades

“Xbox almost didn’t happen.”

This statement is uttered by many who worked on the original Xbox. As it comes from sources with vested interest in Xbox’s legend, it can’t be taken at face value. What we do know is the state of both Microsoft and its soon-to-be competitor, Sony, during the late 90s.

Microsoft, a software-focused company led by Bill Gates, experienced rapid growth as its two main products, Office and Windows, gained widespread acceptance in the early days of computing. This made them arrogant (a trait best displayed during the antitrust hearings) while simultaneously skittish and paranoid. In Gates’s eyes, anything could be a threat to their empire: who could say whether Lotus Software, Apple Computer, or one of the many Linux distros would suddenly rise to challenge their dominant position? The mere possibility meant they could leave nothing to chance.

Mostly recognized for its consumer electronics, the Japanese giant Sony cemented its dominance in entertainment with the 1995 launch of the PlayStation. Before this, Sony was already everywhere: TVs, handheld cameras, Walkmans, music, movies, and more. In any entertainment technology, Sony had a presence. Such widespread success bred a sense of arrogance similar to Microsoft’s. Yet, as they prepared for the PlayStation’s successor, Sony may have overextended itself.

The PlayStation 2, designed with a multimedia focus that reached beyond gaming, prompted Sony to prepare a powerful strategy. There was speculation that the PS2 could integrate seamlessly with other Sony devices, making it a potential PC replacement at home. Recognizing this, Sony marketed the console as the centerpiece of the living room, often displaying setups that included only the PS2 while omitting traditional PCs — even Sony’s own VAIOs. Microsoft saw this positioning as a direct threat to Windows. Before the company could officially respond, four bold Microsoft employees, the so-called ‘renegades,’ set out to challenge this threat.

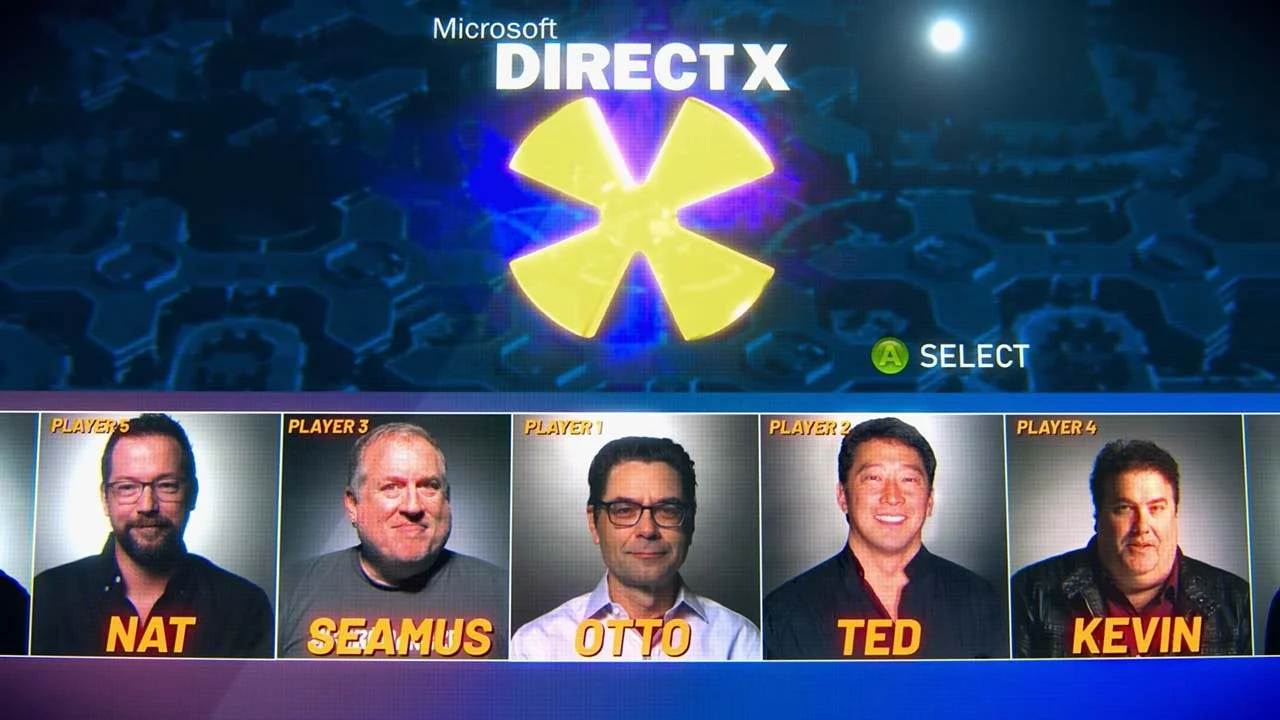

Seamus Blackley, Kevin Bachus, Ted Hase, and Otto Berkes, developers on the DirectX team, made it their mission to persuade a company that had never produced hardware to get into that business, and not just any hardware, but a game console. In hindsight, it was natural for a team that oversaw the toolset that enabled graphics applications on Windows to suggest this kind of hardware. Before DirectX, running graphically demanding games meant shutting down Windows entirely to free up system memory. In fact, a game console represented the next frontier for DirectX. Its codename was ‘DirectX-Box,’ or just ‘Xbox’ for short.

Microsoft wasn’t unfamiliar with games. Windows included games whose sole purpose was to acclimate users to the graphical user interface, and the company published titles like Microsoft Flight Simulator, but the software giant never prioritized games. They were viewed as just another useful tool in Windows’ toolkit; in fact, the ‘Games Group’ was staffed by a small team. This would change when Ed Fries left the Office team for the games division, with many of his colleagues calling the move career suicide.

Under Fries’s leadership, the team’s profile grew as they landed major publishing deals. One notable example was Age of Empires, a real-time strategy game that incorporated elements from Sid Meier’s Civilization and featured technological eras as a gameplay element. The game’s unprecedented success eclipsed the team’s earlier projects, which had been treated more as a hobby. Amusingly, after the launch of Windows 95, Fries recalls the group wearing shirts emblazoned with ‘Microsoft Knows Games.’ Anyone seeing someone wearing that would be tempted to give the nerd a wedgie — even after AoE’s success.

These efforts bolstered not only the Games Group but also DirectX as a development platform. Fries was a potential ally for Xbox: convincing him to join Team Xbox would boost their proposal’s credibility, as the troublemakers’ pitch had been repeatedly shot down. Not only that, but Fries was among Gates’s most trusted men. When the four men came to Fries with their pitch, he was more than happy to help move it forward.

When Sony announced the PlayStation 2, its bold vision shook Microsoft executives, including Gates. From a risk assessment of the Emotion Engine to emails circulating through every game-related group within Microsoft, the renegades saw their chance to pitch their project. “Not only do we have a perspective on this, we actually have a presentation, a PowerPoint deck that we’d like to share with everybody.” With Fries backing them, they meant business.

The first meeting with Gates pitted the Xbox team against the Windows CE team, which had ex-3DO people among its ranks. With differing opinions on how the company should tackle this imminent threat, it was up to them to convince Gates which group should lead the initiative. Team Xbox was a group of naïve idealists, whereas the CE team was more conservative in their approach, having learned hard lessons from the 3DO’s failure. Allegedly.

A quick crash course on the 3DO: it was both a console specification and a licensing model. Imagine Microsoft licensing an exact Surface hardware spec to different manufacturers, while collecting royalties on every hardware unit and every piece of software sold — that was the 3DO model. Since manufacturers received no game royalties, they had to profit from hardware sales alone — the opposite of the razor-and-blades model Nintendo and Sega had perfected. Panasonic launched its 3DO at $699 in 1993 dollars. When adjusted for inflation, the 3DO remains one of the three most expensive consoles ever released, surpassed only by the Neo Geo and the CD-i.

None of the arguments by either camp fully convinced Gates. Fortunately, the Xbox team had a trump card: a working prototype. It was built with slightly aged, off-the-shelf PC parts, and ran a heavily modified build of what is assumed to be Windows 98. Pair the removal of bloatware, BIOS modifications, and a week of no sleep for the renegades, and the result was a device whose killer app was booting from cold to operational in four seconds max. Gates could not believe what he was seeing; he asked for the prototype to be shut off and booted again multiple times. “Why the fuck doesn’t Windows boot like that all the time?”

Anyone who used Windows 95 or 98 recalls the famously slow boot times. Storage technology’s limited I/O performance didn’t help matters, but the real issue was Windows’s proliferation of start-up components, which contributed to a bloated and sluggish system. Microsoft wouldn't prioritize efficiency for years, only addressing boot times as hardware improved enough to mask the bloat. That’s why the prototype’s achievement was so remarkable: on the same hardware used for Windows 98, it was possible to demonstrate a fully operational environment that loaded in virtually no time at all.

Gates was on board. However, the $500 million initial investment was a tough ask. Steve Ballmer, the de facto CFO, needed to sign off on the project, and he wasn’t a fan. A second meeting was called; Ballmer sat in on this one, alongside sales, marketing, and software executives. He went over the bill of materials for the console, making it painfully evident that the Xbox team hadn’t thought that far ahead. The economics looked horrible, even more so under a razor-and-blades business model.

Unable to counter the financial argument, the Xbox team fought back in the one way they knew: reiterating what was at stake. The executives might’ve been content with their empire built atop Office and Windows sales, but not the renegades. They strongly believed that a Microsoft that didn’t bet on this project would be relegated to stagnation in a rapidly evolving market. The Xbox project allowed the software giant to bank on its expertise and developer acceptance of the DirectX toolset to expand its utility past the personal computer. Given Sony’s credible threat, $500M was nothing compared to what they’d lose.

Ballmer pledged his support and, with it, Microsoft’s wallet.

The Valentine's Day Massacre

Now that the Xbox project is greenlit, the fact that Microsoft has never made hardware dawns on Team Xbox. They didn’t have manufacturing partners to lean on, let alone their own plant.

One possible solution was to strike a deal with the OEMs. They got laughed out of the room. A Sega partnership was briefly considered and quickly dismissed. Sega was a sinking ship; Microsoft wanted no part of it. And when the software giant knocked on Nintendo’s door with a buyout offer, they got told off. Ironically, Team Xbox used the tactics Team CE would’ve used had they won the bid, with similar results. A sense of urgency drove everything: the Xbox *had* to be announced at GDC 2000, only months away, and Team Xbox felt like a fish out of water.

Conversations about the Xbox’s OS began before any hardware talks. The box shown at the Gates meeting ran a build that wouldn’t be viable in the final product, and neither was a full Windows build. The NT kernel, the brain of the eponymous operating system, was an option, but the Windows team wouldn’t give it up. One night, as the division geared up for the release of Windows 2000, the renegades broke into the server room and stole the kernel. Work on it began immediately, reducing an already small footprint even further: from 4 MB to about 200 KB.

On February 14 at 4 PM, Team Xbox convened to review the project's latest updates and request additional funding. Robbie Bach, E&D division president, dubbed this meeting the 'Valentine's Day Massacre' — "all Valentine's Day activities are now off." The executives endured over 4 hours of heated debate, keeping their spouses waiting past dinnertime as they fought to save Xbox.

Gates wasn’t happy with what he read on the pre-meeting deck. He thought he had agreed to a games-only Windows box, when the reality was far from it. Feeling swindled by Team Xbox, he lashed out: “This is a fucking insult to everything I've accomplished at this company!” As Ballmer went over the business case, the P&L assessment was even worse than the one from the first meeting. It was a back-and-forth shouting match until Bach said, “Well then, let’s not do it. We haven’t announced anything.” That didn’t lower the temperature, as the arguing shifted to the sunk cost of the initial investment.

Someone in the room asked, “What about Sony?” Gates weighed which option he disliked less: launching a product that contradicted the principles behind Microsoft's success or letting Sony take over the rest of the household. That framing sealed the deal: Xbox got the additional funding it needed, and the project could move forward. When Xbox veterans say "Xbox almost didn't happen," they refer to this meeting. Even those who weren't in the room knew how high the stakes were — and how close it came to death.

With the Sword of Damocles lifted, the clock was ticking toward GDC. When designer Horace Luke was tapped to create a proof-of-concept for the hardware, he made a statement: a metal X that could not be confused with a PC whatsoever, despite offering familiar PC tools. Fitting the components inside the metal X was complex — imagine Tetris with a motherboard. More importantly, these were working prototypes, not just design mockups.

The team brought three of these prototypes to GDC. All three failed. As the auditorium filled up, Blackley frantically soldered backstage to get them working for the demonstration, which he’d also be responsible for giving. The people in the room knew Blackley for Trespasser, a game set within the Jurassic Park universe, which featured the first full physics engine in the medium. But instead of it being lauded for its impressive tech, it was fresh on the attendees’ minds as a commercial failure. Even as the room was skeptical, the demos went off without a hitch, including a physics-based one, informed by Blackley’s experience with the tech. More impressively, they were running from the prototypes, with failsafes ready in case the stage prototype crashed.

After surviving GDC, the box needed a proper name. It was never meant to be called ‘Xbox’ — it was used while a different name was settled on. Hundreds of thousands of dollars were spent on market research, only to come up with names like ‘Frixion,’ ‘Vybe,’ and ‘Xantra,’ among other terrible choices. Their winner was ‘11X,’ because ‘it goes to 11,’ a Spinal Tap reference. Evidently, this wasn’t working. “Alright, we’ll get on Xbox” — but was it going to be ‘Microsoft Xbox’ or just ‘Xbox’? As journalist Tom Russo noted, the Microsoft name was a liability. “Might as well call it ‘Nerd Box,’ right?”

Sony beat Microsoft to market: the PlayStation 2 launched six days *before* Xbox was announced at GDC, putting Microsoft in catch-up mode. Informally, Xbox aimed for a launch in November 2001 but only confirmed it at E3 that year. Writer N’Gai Croal notes that most companies take up to seven years to develop a console; Microsoft had just 18 months from announcement to launch. Pure madness.

Not only that, there were shakeups within Team Xbox. Rick Thompson, the man Ballmer tasked with oversight of the Xbox project, left Microsoft, leaving Bach to pick up the mantle in what he describes as “the worst 18 months of my professional career.” A man who doesn’t play games, barely understands the gaming business, and often was the adult in the room among a group of ragtag kids. Bach could not be less qualified to lead the Xbox team if he tried.

The Final Design

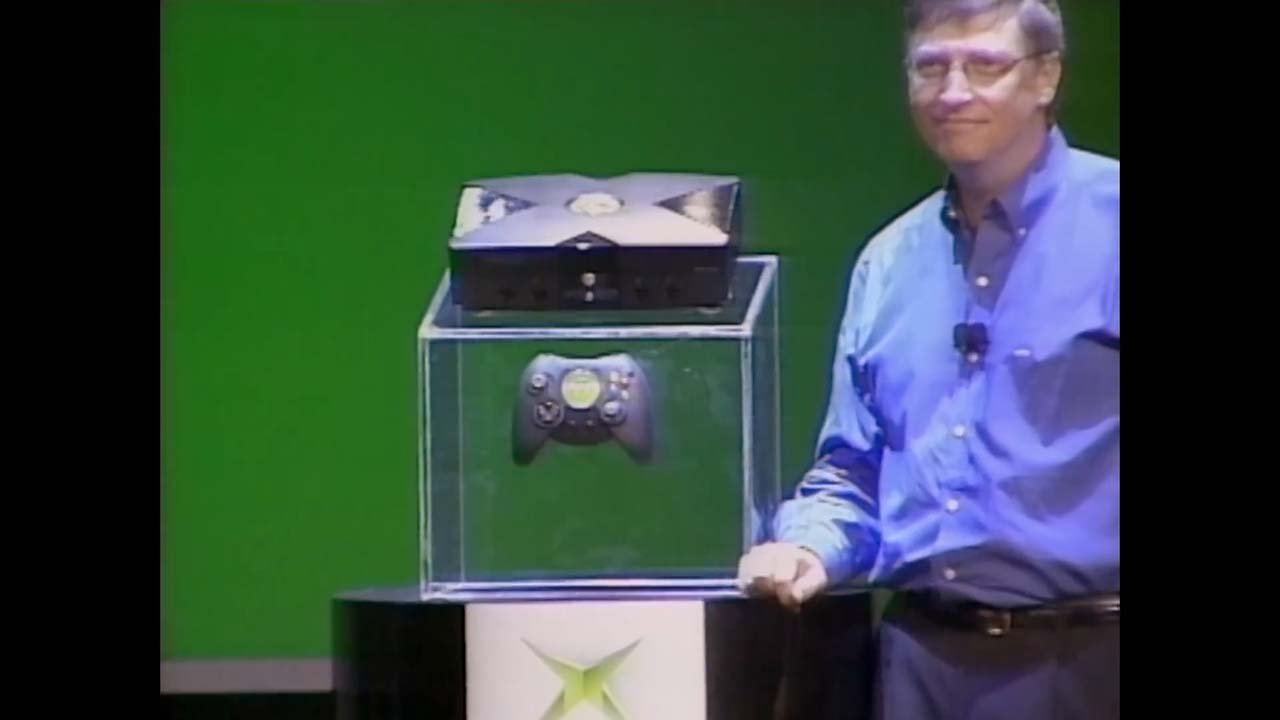

By CES 2001, it had been a year since the Xbox was unveiled. The final hardware design was revealed on stage, with designer Horace Luke stating how it was “quite a miracle to fit everything in that box.” It retained the ‘X’ motif, integrating it into the chassis. Dwayne Johnson was hired to help pitch the console while in character, sharing the stage with Gates and the final Xbox design. With no games to showcase, an entertaining back-and-forth between the two men served as the perfect distraction.

Microsoft's compressed timeline meant they couldn't have titles built from scratch. They needed games already in development, ports, basically anything that could hit the November launch window. Among the pitches received, there was one from a studio that wanted to revisit a title they previously released on PC. They thought that, with sufficient investment and care, the game could work in 3D. Microsoft disagreed; they didn’t believe the game could successfully make the transition.

That game… was Grand Theft Auto III.

A younger Sam Houser, of Rockstar Games fame, heartily endorsing the Xbox concept in footage from Microsoft's GDC 2000 presentation. Archival footage, upscaled.

It sold 14.5 million copies, defined the early 2000s, and became one of the most lucrative console exclusives in gaming history. Ironically, Sam Houser, Rockstar's co-founder, vouched for Xbox at GDC the year before. Houser believed in Xbox; Xbox didn't believe in him. GTA3 eventually came to Xbox, but by its 2003 release, Sony had already seized the cultural zeitgeist and the sales that came with it.

At Microsoft's GameStock event in March, the rushed launch became painfully evident: a slim selection of titles, none of which fully utilized the hardware's capabilities. Instead, the showcase featured a cult classic stolen from Sony, a repurposed Dreamcast game, and three forgettable titles in Amped, Azurik & NFL Fever. The event would have been just as unmemorable, if not for Halo’s live demonstration.

Known for Marathon and Myth, Bungie was the id Software of Mac gaming, but being king of a niche platform didn't pay the bills. The Myth II disaster left them financially vulnerable — as they worked on multiple prototypes to secure immediate funding, their reserves continued to dwindle. One of these prototypes was Halo, which Fries’s employees encountered while scouting for games. Seeing potential, Microsoft threw Bungie a lifeline with one condition: give me Halo. The game that would have theoretically been released on Macintosh became an Xbox exclusive alongside the studio that made it, much to the dismay of their fans. Gross mismanagement is in Bungie’s DNA — but that’s a story for another time.

The final litmus test for Xbox was that year’s E3. It was a disaster.

Microsoft delivered its 8 AM presentation to a hungover media group fresh from Sony’s event the night before, and they weren’t in the mood for disappointment. When Bach attempted to do a live demonstration of the Xbox, the machine failed to turn on. No one thought to test the hardware beforehand; the same team that needed last-minute soldering at GDC hadn't learned their lesson. The incident was embarrassing, but survivable once PR did its damage control. What wasn’t survivable was the killer app, Halo, failing to impress on the show floor.

Unfinished software running on unfinished hardware: whichever the culprit was, the result for the attendees was the same. It was a bad demo, and they wouldn’t let Microsoft live it down. Peter Moore, Sega of America’s COO, delivered a brutal assessment: “Retailers aren’t gonna stock you, developers aren’t gonna develop for you, and consumers think the product’s not very good and it’s too expensive.” Electronic Arts’ CEO Larry Probst was even blunter: “Microsoft has a lot to do over the next few months. They are on a death march now.”

Probst didn't know how right he was. The six months between E3 and launch became exactly what he described: a death march, and a self-inflicted one at that. Microsoft had never created a consumer electronics device of this complexity and had to navigate a wide range of logistical and regulatory challenges. Bach recalls sending staff to China solely to procure hard drives faster than the existing supply chain could deliver, then having them carry the drives back as hand luggage. The crunch, the sheer velocity at which they had to move, the stress… it was chaos.

However, the documentary frames this as a noble sacrifice. Let there be no doubt: this is corporate propaganda. Team Xbox wasn't a scrappy startup; they were Microsoft employees backed by billions in corporate wealth. The death march wasn't inevitable — Microsoft could have hired more staff, extended the timeline, or scaled back the launch. Instead, they chose to exploit their existing workforce, repackaging the casualties as 'dedication.' That one nervous laugh from a nameless Xbox veteran on their failed relationship? That's not passion. It's someone who learned to normalize abuse, and they’re not the only one.

In November 2001, Microsoft launched the Xbox, but at what cost? Many nameless employees whose stories we'll never know, as well as all of the original renegades. Hase and Berkes left early in development; Blackley and Bachus departed shortly after launch, citing Xbox as their biggest achievement to date. They were just as burned out as the rest, with one key difference: they could afford to leave. Their future ventures failed, but they'll forever be known as the 'fathers of Xbox.'

That looks damn good on a resume.

The Crunch

The spotty quality of Halo’s E3 build, Xbox’s alleged ‘killer app,’ spelled trouble. Six months before launch, drastic decisions had to be taken.

Crunch, a term used since the ‘80s, is the industry's name for mandatory overtime. It is typically invoked when a non-negotiable deadline looms and work has piled up beyond what the team can reasonably manage. Faced with cutting scope, moving the date, or demanding more hours, studios almost always choose crunch. It costs management nothing: salaried software developers typically don't receive overtime pay, no matter how many hours they work.

During crunch periods, workweeks can range from 60 to 80 hours, with extreme cases running even higher. Staff often sleep on-site and have little contact with the outside world, including friends and family. Developers who prioritize work–life balance or expect paid overtime would be better off steering clear of the games industry. This practice is heavily romanticized by all parties involved: publishers, studio heads, gamers, and journalists.

Fries sequestered all of Bungie’s staff from the end of E3 until those discs had to be pressed. To have any chance of hitting the November 15 launch date, Halo’s scope had to be drastically reduced: levels needed to be cut and reused, inefficient code rewritten, and extensive shortcuts taken. The only way you can achieve all of that in just four months — an objectively worse timeline compared to the Xbox hardware team — is with “full-on, old-school crunch.” What else could a bunch of Midwest and CA transplants even do, refuse?

Crunch culture claimed countless developers whose stories we'll never hear — social media didn't exist back then, and they had no voice. Even today, with platforms to speak out, developers without industry clout remain voiceless. Studios still treat crunch as a rite of passage. HR claims it's optional; managers punish those who refuse.

It’s at this point that I introduce Frank O'Connor, EIC at Imagine Media (Future US) and future Bungie employee. Commenting on Bungie's intense crunch period, O'Connor remarked: "The one positive thing that comes out of crunch is ideation and invention and polish."

Bullshit.

O'Connor's comments are incredibly tone-deaf, made worse by the fact that he wasn't there crunching alongside the Halo team. This is the equivalent of someone who's never worked retail explaining to cashiers how Black Friday is actually fun and brings out everyone's best work. It's management mythology, completely disconnected from the lived experience of the people who suffer through it.

That Bungie's crunch was a footnote, and the Xbox hardware rush got two chapters to itself, speaks volumes — the people who actually make the hardware worth a damn are belittled in the process. This dismissive treatment, only to evangelize their platform, is upsetting. Developers have real power in this give-and-take, but seldom the means to follow through. Money talks, and they don’t have the capital that a hardware manufacturer has. The power dynamics are less a quid pro quo than a parasitic relationship, and until collective bargaining exists in game development, this exploitation will continue unchecked.

The Gamer’s Social Network

Halo’s multiplayer mode did what the single player couldn’t: prove beyond doubt that it wasn’t just a novelty for Xbox, but something with lasting power. You could play with your siblings, relatives, or friends: they only needed a spare controller, or to bring their own, to frag each other. Done this way, up to four people could play on a single screen. There was also System Link: a form of LAN (local area network) that permitted up to four Xboxes to ‘talk’ to each other when connected to a network switch. It allowed for up to 16 players, a first for consoles.

Because of this, LAN parties were born. Friends and strangers bonded over pizza and soda, all while fragging each other, with passions running just as high as split-screen matches. Strangers became friends, friends became rivals — the essence of gaming as a social activity. The sheer popularity of these proved Microsoft’s skepticism over the built-in Ethernet port to be unfounded. Now that J Allard had his case study, it was time to move on to phase two of his plan: the ‘global couch.’

Jeff Henshaw, responsible for Xbox and, later, Xbox Live software design when it was being conceived.

It’s worth noting that Allard wasn’t interviewed for the documentary. He appears only in b-roll, while Jeff Henshaw explains how the service came to be. It feels odd to have the person who was tasked with the execution talk about the vision. Allard’s final email before leaving Microsoft didn’t suggest any bad blood, but this choice — having Henshaw, rather than Allard, front the story of Xbox Live — strikes me as a subtle form of revisionism.

Xbox Live is a social gaming platform — the first of its kind — which promised to bring everyone together around multiplayer gaming. To use the LAN party example, what if, instead of 16 people playing in the same place, they were in different parts of the country, or even the globe? That’s the promise of Xbox Live.

For this to work, some aspects had to be standardized. The online identity and friends list weren’t tied to a single game, but to the service itself. Voice chat became the main communication method, leading Microsoft to develop protocols for scalable, low-latency VoIP (Voice over Internet Protocol) — years before Skype would make such technology mainstream.

I want to call this comparison out, as Skype is now dead. For over a decade, Skype was video calling — the verb, the platform, the default. 'Skype me' meant video chat in the same way 'Google it' means search. It dominated until Zoom captured the pandemic boom, Discord won over gamers and communities, and Microsoft's own Teams cannibalized business users. Skype was outlived by the VoIP technology that Xbox Live helped pioneer.

The service was subscription-based: Microsoft sold $50 starter kits with a headset and a one-year subscription. The Ethernet port was already controversial, but charging for something that PC gamers got for free? Allard had to contend with entire departments telling him it was a bad idea, with Drew Angeloff serving as the main dissenting voice in the documentary. The overall sentiment was that Microsoft was committing highway robbery with this subscription.

There was no world in which the starter kit was a value proposition for anyone other than the hardcore gamer, and even that was doubtful. By the end of the 2002 holiday season, the number of Live-enabled titles was in the low double digits, and even fewer were truly worthwhile. Put simply, Microsoft was asking buyers to take a leap of faith on a nascent service: one without games that could showcase its potential, one with voice chat limited to in-game use — unlike PC tools like Ventrilo and TeamSpeak — and one that looked like double-dipping: $50 for broadband, $50 for the service.

Allard’s choice of Ethernet for the Xbox excluded swaths of potential customers. The rural US was left out. People with financial difficulties were left out. US territories were left out. For those of us who got the short end of the stick, Xbox Live felt like an exclusive cool-kids club we just weren’t invited to. In Puerto Rico, broadband wasn’t available until 2005, three years after the service launched; cable TV company Adelphia bet on the new technology to increase its business, leveraging its existing infrastructure. As dial-up was so common, many people — my mom included — saw little reason to move to a faster service. And as a full-time high school student, I found Xbox Live financially out of reach. So, while I had an Xbox, I didn’t get into Xbox Live, not even when Halo 2 launched.

And speaking of Halo 2…

The game was on life support. The follow-up to Halo was originally scheduled for release in 2003. It didn’t make that date — the weight of expectation crushed the studio. Halo 2 was a mix of ideas with no real cohesion, akin to throwing things at the wall and seeing what sticks. Or, as Bungie writer Joseph Staten put it: “We were really good at coming up with great ideas. We weren't quite as good at production discipline and figuring out how to right-size all those ideas, fit 'em into a box, timeline, and ship on time.” It’s easy to be honest in hindsight; how gamers didn’t realize this until a decade later is shameful.

Fries saw trouble. Eventually, Bungie studio head Jason Jones requested a one-year delay to rework the game. Fries brought the request to Bach, who called a meeting and held a vote. Fries was overwhelmingly voted down — the original date would stand. He stormed out, threatening to resign. Bach went after him, and the delay was granted. Fries would still leave six months later.

Bungie cost Microsoft one of its life-long employees.

The Red Ring of Death

Peter Moore, already employed by Microsoft when Sega abandoned the hardware business, joined the Xbox group upon Fries’s departure. He’d manage the latter half of the Xbox’s life and oversee its successor.

The Xbox 360 was a gaming console with a multimedia approach, positioning itself as a device that even non-gamers could use. Microsoft didn’t win the battle against Sony, but it reached a stalemate over the living room. With a rumored fall 2005 launch for the PlayStation 3, Moore issued his directive: Xbox *must* beat Sony to market. And they did, releasing their console a full year before the PS3, after Sony ran into trouble with the fledgling Blu-ray format.

Regardless of what Sony thinks, Xbox began the seventh generation of video game consoles; for the first time, they finally had mindshare and momentum.

Until three red lights blinked to life.

According to Xbox’s support documentation, what became known as the ‘red ring of death’ (RROD) indicates a ‘general hardware failure.’ Usually, it’s just the three blinking lights, but sometimes error E74 appears on the display. By the summer of 2006, early 360 adopters had already filed hundreds of reports about hardware failures, yet Xbox figureheads continued to dismiss them as “isolated issues.” The problem, however, became so significant and widespread that it even made national news. It’s one thing for the gaming press to criticize, but when traditional media start echoing those critiques, Microsoft is compelled to respond.

Users reported that their boxes ran hotter than usual before the RROD appeared; silicon heats up during use, and lower power consumption means less heat is emitted. Microsoft’s response, then, was to address the console’s power consumption with the roll-out of four distinct motherboard revisions between 2007 and 2009: Zephyr, Falcon, Jasper, and Tonasket. However, these motherboard revisions did little to mitigate the problem. A ‘general hardware failure’ doesn’t aid in diagnosing the issue, but what was even more perplexing was how this also puzzled Microsoft engineers. They knew it was a heat dissipation issue, but which component was failing? The heatsink, the thermal paste, or something completely unrelated to the cooling system? Ultimately, the engineers’ attempt to pin a root cause to the phenomenon is comparable to a headless chicken running around.

It became evident that the standard warranty policies wouldn’t suffice. The 90-day period was extended to a full year, including repair reimbursements if the customer paid Microsoft for one, followed by free shipping to the manufacturer’s repair centers. Finally, when it seemed like nothing they did was working, the one-year warranty was extended to three years for *all* 360s manufactured since launch, at a staggering cost of $1.15 billion. The initiative, spearheaded by Moore with Ballmer’s blessing, was presented as Xbox acting in its customers’ best interests, but here’s the kicker: even the replacement 360s died on the user. Same use cases, same results. If the replacements had worked, then the money would’ve been a worthwhile investment — but it was nothing more than an attempt to save face, and the sum was but a drop in the ocean of Microsoft’s wealth. The first three years of the 360 were dark days for all parties involved, especially those who trusted Xbox with their money.

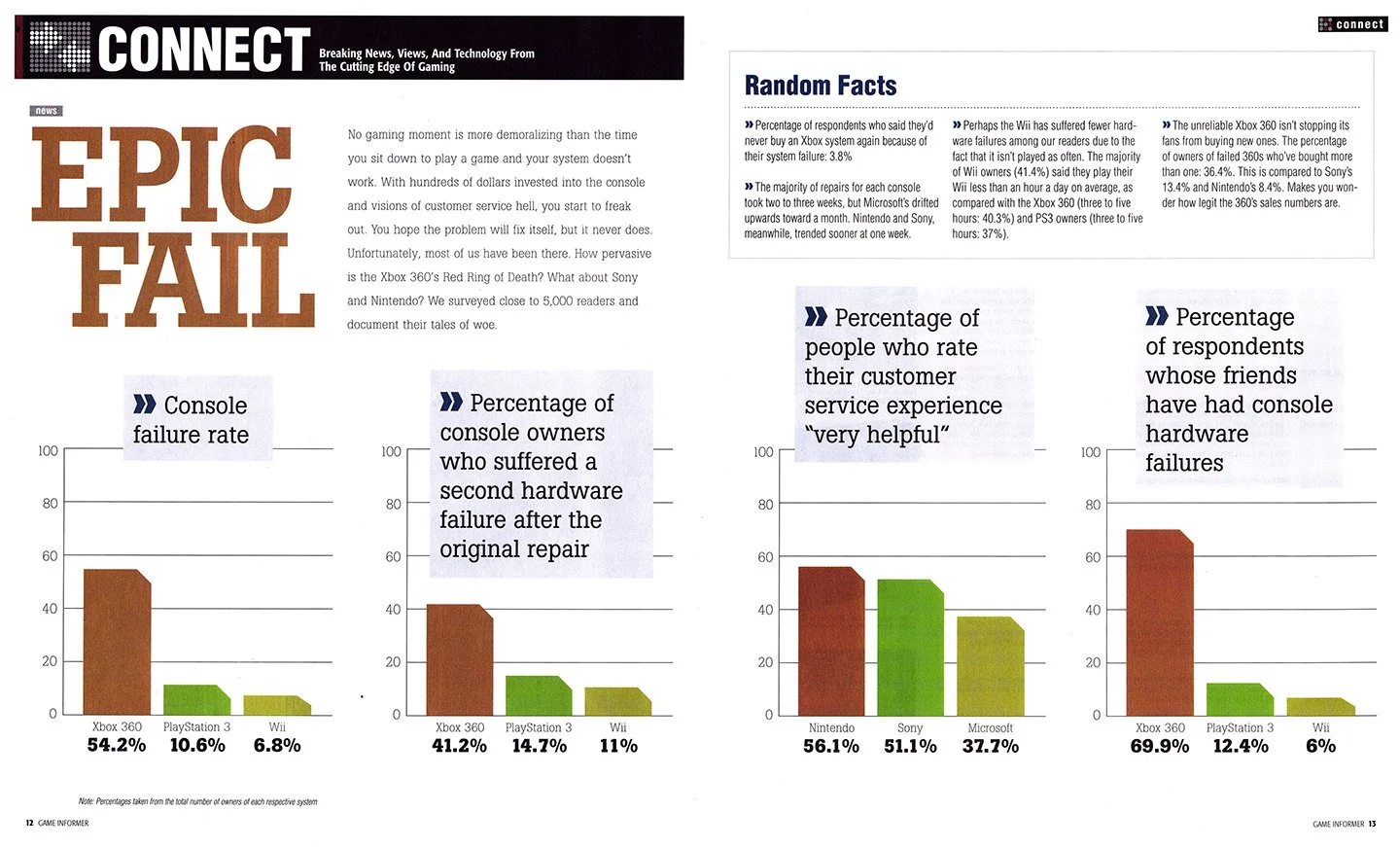

Game Informer issue 197, released September 2009. Pages 12 and 13 collect the reader survey data on console failure rates.

Four years after the carnage, the biggest gaming magazine at the time, Game Informer, surveyed its readers on console failure rates. The results were published in issue 197 for September 2009, and they were damning: 54.2% of Xbox 360 owners had experienced a hardware failure. Compare this to the PS3, which had a failure rate of 10.6%, and the Wii at 6.8%. Worse still, 41.2% of those who'd received repaired 360s experienced a second failure. Even after proving that the ‘fixes’ were just as flawed as the original, 36.4% of these users still purchased replacement 360s. Microsoft had successfully trapped them in its ecosystem.

While all of this was happening, I was in a completely different place, gaming-wise. As I got into games like Kingdom Hearts and Final Fantasy, I felt Sony’s catalog spoke more to my tastes — and it just so happened those experiences were exclusive to the PS2. So in 2005, I traded my Xbox for a PS2. I’d be a Sony customer for the remainder of the decade, getting a used PS3 in 2008 in preparation for Metal Gear Solid’s final entry. Then, a year later, I got a 360 Elite (matte black finish, bigger hard drive) to play the Xbox exclusives. It didn’t RROD initially, but it was banned from Xbox Live after I decided to undergo the JTAG modification to play burned (i.e., pirated) games.

A console built for online play that I couldn't connect with was useless. I cut my losses and got its cheaper brother, the 360 Arcade, after realizing I could use most, if not all, the accessories from the Elite with it. Unlike last time, I wouldn’t dabble in piracy, but I would rub shoulders with the RROD. My 360 Arcade was likely a Jasper model, one we were repeatedly told addresses the underlying cause of the hardware failure… and mine got it anyway. I’m not a careless user either — I didn’t keep the 360 on the floor or in a room with no cooling…

Back then, I was a Sony partisan who took satisfaction in Xbox's failures. Watching this documentary, however, genuinely made me angry — not as a fanboy, but as a consumer. Let’s go over Xbox’s sins with the 360, beginning with its development timeline: August 2004 to November 2005. If the original Xbox’s two years were harsh enough, the 360’s *fifteen months* screamed ‘hold my beer.’ That we didn’t hear of an exodus of engineers during development is miraculous, and there’s no doubt in my mind that, for those who did leave, the experience soured them on Microsoft’s approach and perhaps on engineering as a whole. Previously, they'd relied on off-the-shelf parts; this time, they used custom architecture with an even shorter timeline. They’d been burned by this before — doubling down with an even shorter schedule cannot be interpreted as anything other than negligence, plain and simple.

The documentary states that the first batch of 360s had a very low yield, with the highest number achieved being 60%. Good production lines achieve yields above 90%; even if you don’t reach a perfect 100%, you should still aim for it. It’s downright absurd that the testing methodology was questioned — when 40% of your units fail QC, the problem isn't the tests, it's the product.

It’s also claimed that engineers “solved technical issues” that allowed them to “chip away at the bone pile” of some 600,000 failed consoles and improve yields before launch. But what issues were solved? What changed in the manufacturing process? How did units that failed testing suddenly become sellable? The documentary never explains this, and here’s why that matters: if Microsoft had already identified and fixed defects serious enough to cause 40% of units to fail in production, why didn’t those fixes prevent the RROD epidemic that followed? The deafening silence suggests these weren't real solutions — they were adjustments that got consoles out the door without ensuring they'd survive in customers' homes.

When Microsoft could no longer ignore the problem, and everything finally came to a head, the least they could do was offer an extended warranty while they worked on a solution. Portraying it to the public as Xbox acting in its customers’ best interests — and even implying customers should be grateful — is disingenuous. It wasn’t until the Xbox 360 S that the underlying problem that caused the RROD was addressed, and I’d be remiss if I didn’t mention how independent researchers figured out what the issue was years before Microsoft came clean about it. Until then, business as usual, nothing to see here.

The GPU connects to the motherboard through tiny solder balls. Repeated heating and cooling cracked the solder, breaking the electrical connection and triggering the Red Ring of Death.

The culprit? Improper lead-free solder that cracked under thermal stress. Not just any lead-free solder — Microsoft had chosen a formulation unsuitable for the 360’s extreme thermal cycling. Whether this was cost-cutting or incompetence doesn’t matter; the result was the same: the fix required a complete hardware redesign. Until then, Microsoft continued selling and repairing consoles with the same defective solder, because properly fixing the issue would have meant admitting that the entire product line was fundamentally flawed.

The cynic in me thinks they only felt empowered to disclose the RROD’s root cause after a class action lawsuit filed against the software giant over the hardware defects was killed by the Supreme Court in 2017. No individual has pockets deep enough to rival the might of Microsoft’s legal team — and we all saw how powerful they were in the antitrust case. The fact remains that they knew.

They knew for *fifteen long years.*

Their engineers knew. Their marketing teams knew. Robbie Bach knew. Peter Moore knew. Steve Ballmer knew. *They all fucking knew.* Moore left Microsoft after securing the three-year warranty mea culpa. Coward.

There’s no room for a charitable interpretation here: Microsoft sold defective consoles at $400 apiece, and they haven’t been held accountable for that — and likely never will. It doesn’t matter that Sony shot themselves in the foot multiple times over the PS3’s lifespan: the Xbox brand should’ve died right there and then. The RROD saga was a brazen violation of consumer trust done in broad daylight; the fact that Microsoft employees got ugly sweaters referencing the RROD in 2024, going all ‘haha, remember when that happened?’

Fuck right off with that!

Deep breath. We should discuss Kinect…

The Motion Revolution

A Kinect sensor, alongside an Xbox 360 S with a controller, on display at the E3 2010 show floor. Credit: James Pfaff, Flickr

A seismic shift occurred when Nintendo released the Wii. Instead of a traditional gaming experience, the console popularized motion controls as a core gaming mechanic. Its primary input was a controller that emulated a TV remote control, colloquially known as the ‘Wiimote.’ For gamers, this came out of left field; however, its resemblance to an everyday item appealed to people who weren’t typically gamers. Parents were playing with their kids. Grandparents were playing with their grandchildren. Suddenly, games were good for you.

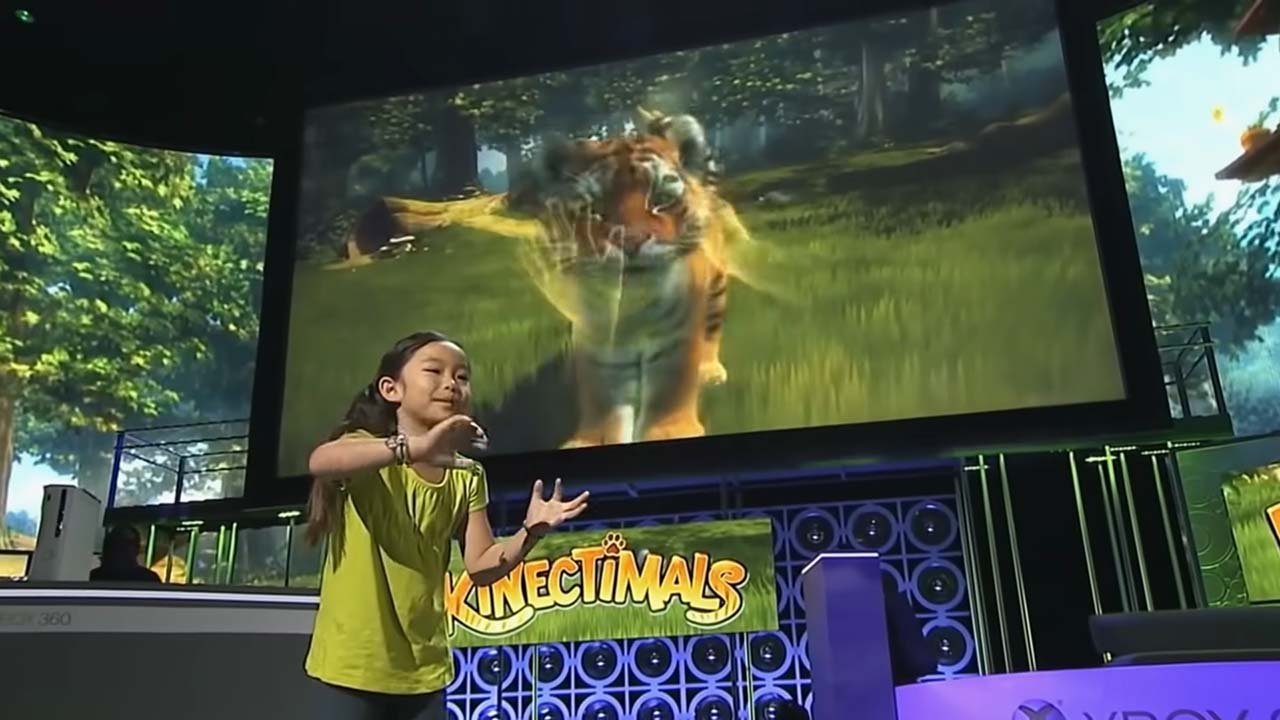

The unlikeliest party brought about the motion revolution, and everyone wanted a piece of the pie. Sony responded by releasing the PlayStation Move, and Microsoft concluded a four-year journey with the Kinect sensor, both in 2010.

The story of Kinect begins in 2006, when a Microsoft employee saw a demo of depth-sensing cameras by the Israeli company PrimeSense. They saw the potential for an application on the Xbox platform, and talks began. The biggest champion was Don Mattrick, Moore’s successor; he even went so far as to say that the traditional controller is an obstacle to accessibility. Whether he sought a pet project to call his own or was acting on the fact that, unlike his prior employers, Microsoft made hardware, his motivation is hardly relevant given the events at the time. That Mattrick already had an interest in the technology is a happy accident — Microsoft didn’t know how popular motion gaming would become. Then again, given its uninspired efforts to compete against the iPod and iPhone years later, Microsoft often was the one left behind.

Eventually, Microsoft’s motion-tracking software and PrimeSense’s depth-sensing hardware converged into a viable product. At its 2009 E3 conference, Microsoft announced Project Natal, the codename for Kinect, with a simple pitch: what if your *entire body* was the controller? Onstage, the company presented Natal-enabled game demos alongside technical showcases of the device’s capabilities. Among them was Peter Molyneux’s Project Milo, a Natal-adapted experiment in “emotional AI” that invited players to interact with the eponymous boy.

Just as Xbox Live was effectively a second launch for the Xbox, Kinect was that for the 360. Select titles were updated to add Kinect support, all while adding family-friendly titles to the already vast 360 library. Despite navigating uncharted waters as an input device, it managed to sell eight million units in two months. This reeks of funky math — I suspect the 360 Kinect bundles were counted among this number, likely bought by people whose console had died on them. After all, that’s how I came to own a Kinect; by the time my 360 Arcade gave in to the RROD, the 360 S with Kinect bundled was already out. I needed a new 360, and while I certainly thought the Kinect wasn’t how I liked to play games, *what if?*

I was part of the market that Microsoft tried to enamor with Kinect: the core gaming audience. It was a fun little novelty, but it was ultimately doomed to be stashed in the closet next to the Wii. I’m sure there were newcomers, but those would’ve been few and far between, as mobile gaming rose astronomically alongside the popularity of Apple’s App Store and Google’s Android Market. Games such as Angry Birds, Bejeweled, and Tetris were perfect for quick sessions, proving an apt distraction for idle thumbs.

Even as the market didn’t favor Kinect, it found a second life in uses outside gaming. As the first consumer-priced depth-sensing camera, the peripheral was enthusiastically embraced by the hacking community for its limitless potential. The medical field, in particular, used it to aid the rehabilitation of stroke patients or as treatment for autistic children — these, alongside other unorthodox applications, came to be known as the ‘Kinect Effect.’ Rather than ending these experiments, Microsoft gave them its blessing and granted access to the peripheral’s software. Statements about how gaming-first tech paved the way for innovations outside this field, while eyebrow-raising, are correct when referring to the Kinect.

Did I use it often? Not really. I'm not the type of gamer who relishes playing with others, nor am I into party games, which Kinect had in droves — it became a mere microphone to bark voice commands at. I also found it cumbersome for menu navigation: the Kinect dashboard, a mere reskin of 2008's New Xbox Experience, did away with 3D perspective tiles in favor of a flat 2D design better suited for motion controls. The presence of a ‘Kinect Hub’ in this dashboard makes it painfully evident that the new dashboard wasn't built with the peripheral in mind, despite bearing its name. The mismatch would be addressed in 2011's Metro dashboard, unifying the interface for both controller and Kinect navigation as part of Microsoft's broader Metro push; Zune HD used it, Windows Phone used it, and Windows 8 used it.

Kinect is a genuinely impressive peripheral — it could’ve been remembered fondly as this interesting device that enabled truly outstanding stuff. However, that’s not what Kinect is known for today. Its vilification would come far too soon, and through no fault of its own.

The Disconnect

With its highs and lows, the Xbox 360 cemented Microsoft as a formidable foil to Sony. Talks of Xbox succeeding because they got lucky were shut down, and the anticipation was palpable for the 360’s successor, despite the RROD crisis. The Xbox One would be Mattrick’s second — and most important — product launch, acting upon the lessons learned throughout the 360’s life, all while continuing to build relationships with both publishers and developers.

Closing in on ten years since the introduction of the 360, media consumption had changed dramatically: the staying power of online multiplayer as a social activity, the meteoric rise of streaming services, and the ubiquity of smartphones. With Kinect’s less-than-stellar adoption rate, Mattrick’s Xbox went all in on multimedia, based on internal data which showed the 360 being used more for multimedia applications than gaming. If the data pointed towards that, then the natural last frontier to conquer was television.

While largely irrelevant today, cable TV dominated the early 2010s, even as streaming platforms like YouTube began encroaching on its territory. Picture a typical living room: a TV with a 360 and a cable box, both connected. Want to watch TV? Switch inputs away from Xbox. Want to play games? Switch back. By 2013, about half of cable households had DVRs built into their set-top boxes, making it easy to record shows with a button press. Smart TVs existed only for early adopters with money to burn — most people had just upgraded from CRTs to LCD TVs when analog broadcasting ended. What if there was hardware that could make your existing cable box truly ‘smart,’ all while eliminating the input switching hassle? This was the spearhead of Xbox One's multimedia proposal.

The gaming story of Xbox One, outside Microsoft’s campus, looked far less flattering. There were strong rumors that the console was ‘always online’ and would severely restrict used games.

At the time, publishers and console makers were increasingly frustrated with GameStop’s parasitic business model. The once-dominant gaming retailer — destined to become a meme stock in the next decade — bought games in bulk and sold them in its stores. When customers traded those games in, the titles went right back on the shelves as ‘used,’ with all the revenue flowing to GameStop. Sales associates were heavily incentivized to push used games, and performance metrics centered on how many used products a given associate or store could move.

An attempted counter to this was ‘online passes,’ championed by Electronic Arts, which gated the online multiplayer behind a one-time fee. However, if you bought the game new, there was a voucher code inside that gave access. There was pushback — it’s the one occasion in which you could meritoriously levy the ‘greedy’ accusation towards publishers. Fortunately, EA’s practice didn’t catch on — they dropped it after three years, even — but the genie was out of the bottle: a war on physical media was being waged.

The 2009 introduction of Games on Demand, the branding for digital downloads of full retail games — the kind you’d see on a GameStop shelf — came too late to make an appreciable difference. The convenience factor hardly mattered: price-conscious customers noticed how digital titles didn’t receive the deep discounts physical media did, whereas customers with a spotty broadband connection or whose data was capped found the sizable downloads impractical. The Xbox Live Marketplace would continue to be known for its smaller-scale Arcade titles, and as a viable self-publishing option for budding developers with no publisher backing. For retail titles, though, physical games and sharing them with friends remained important.

Then came May 21, 2013. A day that’ll live in infamy for Xbox.

The bizarre two-part unveiling strategy for the Xbox One began by showcasing the final hardware and walking through its multimedia features; the games would be revealed at E3 the following month. When Xbox announced the event, they didn’t hint at this strategy; instead, they invited gaming influencers to campus and gamers worldwide to watch the presentation. It became a painful, hour-long slog with no games, but plenty of buzzwords: cloud, smart devices, and of course, TV.

Gamers are a cynical crowd — and by now, they had social media. That the eighth generation’s first unveiling was a flop of this magnitude meant that a treasure trove of memes went live on Twitter instantly. The key photograph of Xbox One was repurposed as an image macro, with derivatives depicting the many ways this reveal failed the core gaming audience. Microsoft had a severe PR problem on its hands, and Mattrick made it worse with his non-answers to the then-circulating always-online rumor. Body language in public speaking is important, and Mattrick’s disregard for the question confirmed what former Microsoft employee Adam Orth said about “an ‘always on’ console” months before the reveal.

After Mattrick’s comments, Xbox PR was deployed to try and clarify them, doing their best before E3. But Mattrick wasn’t done, oh no. Seemingly unsatisfied with the fire he had lit during the first event, one Microsoft was actively and unsuccessfully trying to put out, he threw napalm at it in an aside with hype man Geoff Keighley after the E3 presentation:

Note how Keighley gave Mattrick an out, and he didn’t take it.

The reaction to these comments, much like the launch event, was swift — it turned many Xbox loyalists into PlayStation lifers. Mattrick might as well have dropped by Sony HQ to collect his paycheck, perhaps an ‘employee of the month’ plaque. Many 180s happened after E3: the confusing game-sharing model Xbox One put forth was scrapped, the ‘always online’ requirement was dropped, and so was the plugged-in Kinect requirement. This last was a half-measure, as the box still included the peripheral — a stark reminder that it *used* to be mandatory to have it plugged in.

True, the original Kinect’s challenge was courting game developer interest without a sizable install base, but making the successor ‘a core part of the experience,’ marketing speak for mandatory, wasn’t the way to go. Kinect didn’t linger in irrelevance; it was burned at the stake as the crowd read the indictment: Kinect added $100 to the price tag. Kinect took valuable resources away from developers. Kinect is worthless to gamers. With the vilification of Kinect complete, Xbox’s next head, Phil Spencer, was forced to cut his losses and kill Kinect, all to appease a crowd that no longer trusted Microsoft.

I'm appalled that Mattrick appears in this documentary at all. His first public statement on Xbox One — a full decade after abandoning it — comes in a documentary bankrolled by his former employer. He offers no apology, no accountability, no recognition of the damage he caused. Watch his interview: there's no remorse.

Because Mattrick didn't just leave Microsoft after the Xbox One struggled. He left before the console even launched. Two months after the catastrophic May reveal, with the backlash still raging and Microsoft frantically reversing DRM policies Mattrick championed, he took a job at Zynga and disappeared. He set the Xbox One on fire, then walked away before it hit shelves. He never faced a single customer who bought the console he designed. He never answered for the $100 price gap or the forced Kinect, or the always-online arrogance. He just left Spencer and the Xbox team to spend the next decade cleaning up his mess.

Moore at least faced the RROD crisis. He didn't run the moment things got bad — he stood in front of cameras, announced the warranty extension, and took the criticism. Only after publicly acknowledging the disaster did he leave. Mattrick couldn't even manage that. He caused the disaster, saw the backlash, and was gone before the console hit shelves.

And in this documentary, he still believes he put Xbox "on the right path." If the right path means an identity crisis so severe that Xbox increasingly resembles Sega's slow march toward irrelevance, then sure — he succeeded. Xbox One ended its first year 5 million units behind PS4 and never caught up. Microsoft has since spent the entire generation in damage control for the decisions Mattrick made in 2013 and refused to face consequences for.

If Xbox dies, I want Donald Allan Mattrick's name and cowardice to be thoroughly documented in every single retrospective and singled out as the man who killed the Xbox brand.

“I was a huge Xbox fanboy in those days. I believed this was a momentary blip on their momentum, still standing by my choice to back Xbox — I was that burned out with Sony’s handling of the 2011 PlayStation Network hack. As such, I neglected all the obvious signs: the clunky interface, the Kinect requirement, and the lack of games. Even Call of Duty, for which Microsoft had a timed exclusive agreement with Activision, took its business to Sony. All of that didn’t matter: in my head, Xbox once again was the underdog it was when the original hardware debuted.”

Having recently graduated from college around the time of the Xbox One’s release, I was underemployed, broke, and facing the start of my student loan repayment. Most of my paycheck went toward cellphone service and internet; a $500 console simply wasn’t realistic for my budget.

In the fall of 2014, GameStop announced a partnership with Comenity Bank to launch its store card. It was marketed as a way to make the hobby more affordable, at an interest rate starting at 29.95% APR. On one of my visits, a store employee pitched the card just like they pushed trade-ins, used games, or a Game Informer subscription. I already had a few store cards, but I thought, eh, why not — I’d give it a shot.

I was approved for a thousand-dollar credit limit. Suddenly, the Xbox One became affordable. I couldn’t buy it then, but I’d definitely drop by later to grab one.

A Kinect-less SKU for $399 existed as early as the summer of 2014, but with all of the existing Kinect inventory left to sell, one way to get rid of it was via game bundles. For $450, the buyer got the console, the Kinect, and a digital download code for the bundled AAA game. I didn’t have any particular affinity for the peripheral, but it’s all about optics: the extra $50 seemed like it was for the game, NOT Kinect. I’d have spent roughly the same amount had I gone Kinect-less with a separate game.

The value proposition, however weak it may have been, was there.

The Clean-Up

Microsoft was undergoing significant changes. Over the summer of 2013, Ballmer unveiled “One Microsoft,” a sweeping reorganization that consolidated divisions and aligned them under a single umbrella. The goal was to “innovate with greater speed, efficiency, and capability in a fast-changing world.” Under this structure, Xbox OS moved into the Operating Systems group, with Xbox engineers working alongside Windows engineers, while Xbox hardware shifted to Devices and Studios. The result was a split Xbox division spread across two engineering groups, neither led by anyone with Xbox experience, let alone gaming industry experience.

Six weeks later, Ballmer announced his retirement.

Meanwhile, Mattrick’s hasty departure threw the Xbox division into chaos. With no dedicated Xbox head appointed, the division reported to Julie Larson-Green, head of the newly formed Devices and Studios division. Larson-Green was a programmer by trade with zero gaming industry experience — the parallels to Robbie Bach’s story draw themselves.

The many 180s Xbox One performed were the result of decisions by committee, more interested in putting out the fire Mattrick lit than ensuring Xbox One’s success. The company-wide reorganization under “One Microsoft” and the new CEO search were a two-fold blow for Xbox: it’d run rudderless, and replacing the rudder was not a priority. By the time Spencer was appointed as Xbox head at the end of March 2014, newly appointed CEO Satya Nadella had been on the job for less than two months.

The gaming community, meanwhile, was radicalizing. Feminist Frequency founder Anita Sarkeesian faced harassment campaigns, the proverbial canary in the coal mine for GamerGate the following year. Larson-Green, a woman inheriting Mattrick’s disaster, received her share of misogynistic backlash. This was the landscape Xbox One launched into: corporate dysfunction intersecting with cultural toxicity.

When Spencer took over, Xbox One was, in his own words, “outsold six or seven-to-one in the market, maybe eight-to-one.” For context, the PlayStation outsold the Sega Saturn 11:1 in lifetime sales, a catastrophic defeat nearly two decades prior that began Sega's spiral out of the hardware business. That this was the only comparable example spelled trouble. Sony was going in for the kill; Spencer had the unenviable task of stopping the bleeding and stopping it fast. Fortunately, he had a plan to do so.

Spencer’s Xbox put gaming front and center. The cleanup initiative started by reassuring game developers that Xbox would be a reliable partner. Around this time, the PC indie scene delivered standout titles like The Stanley Parable, Papers, Please, and Don’t Starve, among others that were notable even if not always commercially successful. In contrast, PS4 offered no path for indie self-publishing unless developers secured a publisher or were prominent enough for Sony to approach them directly. The revived independent developer program, now known as ID@Xbox, combined the Xbox Live social features of Arcade with the self-publishing capabilities of XBL Indie Games. With an eye on rivaling Steam, Xbox positioned itself as a place where indies could have easy access to a platform used by thousands, which made for good PR.

Microsoft is no stranger to studio acquisitions, and they’d make a key one. (Not ZeniMax, that comes later.) Xbox saw an opportunity to appeal to gamers who were starting families of their own and sharing their hobby with their children. They’d go on to acquire Mojang, the studio behind the popular sandbox/survival game Minecraft, beloved by kids who would spend hours playing, draining the batteries of their parents’ smartphones and, later, their hand-me-down iPads. The acquisition announcement, pitched as a move to help the fledgling studio support the game for years to come, was met with skepticism. A common sentiment was that Microsoft sought to ‘ruin’ Minecraft by making it exclusive to Windows and Xbox.

They didn’t. Mojang continues to support all its active SKUs with Xbox’s blessing, all while the series continues to capitalize on its newfound fame. It became a cultural touchstone; what Kirby is to my gaming journey, Minecraft is to many Gen Zers — we passed on what we love to the next generation, and they’ll pass it on to their children, and so on.

Xbox Game Pass, often described as the “Netflix of games,” also launched during this turbulent period. The subscription service initially offered access to a curated library of 100 games, but it has since grown to include titles from both Microsoft-owned studios and third-party developers, as well as PC versions when available. Unlike other similar services, Xbox offered the full game, with no restrictions or artificial tolling, so long as you were subscribed.

Over the years, much has been said about the sustainability, or lack thereof, of the Game Pass business model, particularly about whether sacrificing exposure to preserve margins is worthwhile. At the time of writing, the most recent controversy surrounding the service arose over the summer, when it was revealed that Game Pass’s operating costs exclude first-party development expenses. To better illustrate this, imagine a bakery that’s already struggling to stay afloat. The owner goes to a bank to ask for financing. When the books are opened, the bakery looks profitable, but only because the accounting counts sales minus employee wages and nothing else. What about rent, utilities, and supplies? While technically correct in isolation, this can only be interpreted as bad-faith accounting alchemy on Microsoft's part.

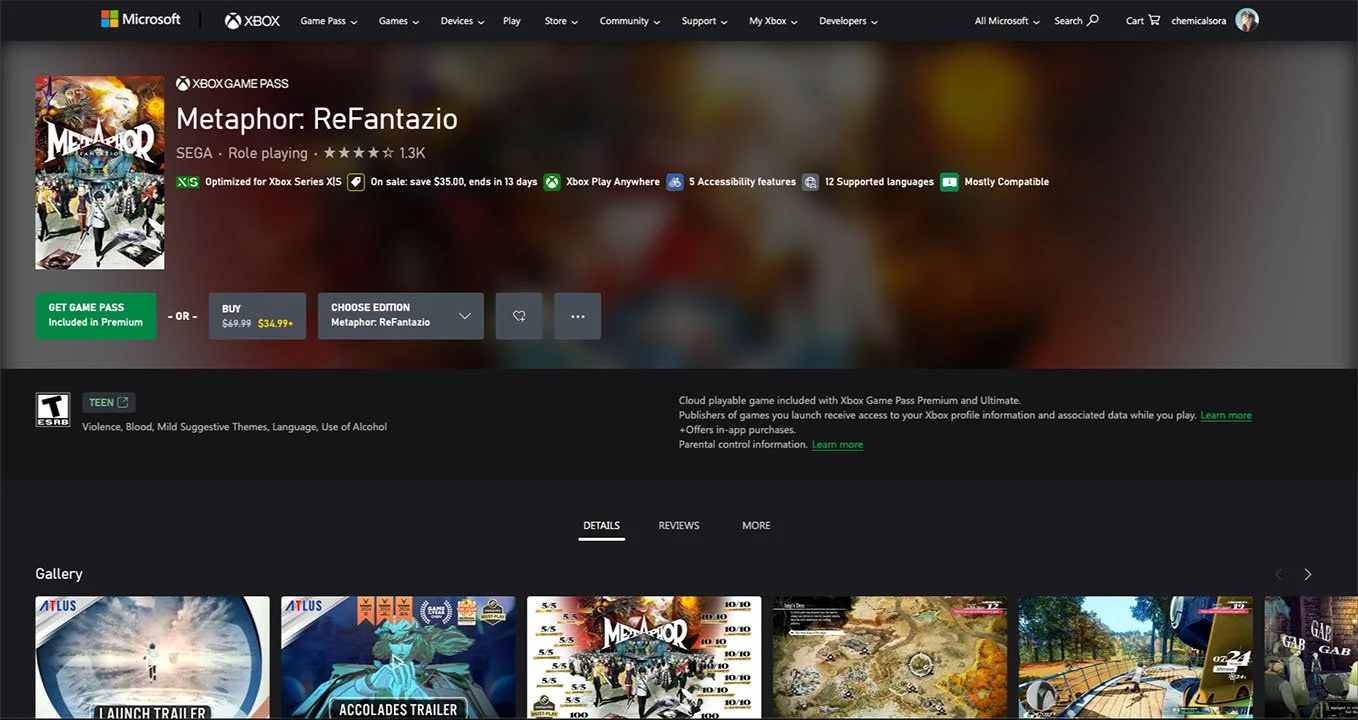

But back to Game Pass — the value proposition was succinctly captured by Sarah Bond, who helped architect the service: “We heard from people who said buying multiple $70 games is actually just not economically possible.” Game Pass fixes the affordability problem for players, but it does so by undermining developers and introducing a fresh wave of issues. There’s no easy answer, and it’ll all depend on the type of gamer facing that choice.

I'm facing this dichotomy firsthand. Metaphor: ReFantazio is on Game Pass right now, but I'm choosing to wait until it goes on sale. I don't want the pressure of rushing through an 80-hour JRPG because the game's time on the service is limited. In contrast, GameFly, the physical rental service, gave renters control: keep games as long as you want, no due dates, no arbitrary removal. Game Pass flipped this relationship. Microsoft controls when games disappear, and I'm left racing against a clock I can't see.

Alongside Game Pass, backwards compatibility with Xbox 360 and original Xbox games was also announced. Neither PS4 nor Xbox One launched with this feature, and had it been up to Mattrick, Xbox One never would have gained it. The engineering challenge was that the Xbox One's x86-64 architecture couldn't natively run the Xbox 360's PowerPC code. Each game had to be reverse-engineered, translated into an intermediate form, and recompiled for the new architecture. Ever since the PS2 era, the feature has been a darling of the gaming community. Yet it is so often written off as a thankless endeavor that diverts manpower to something realistically used by only a single-digit percentage of the overall player base.

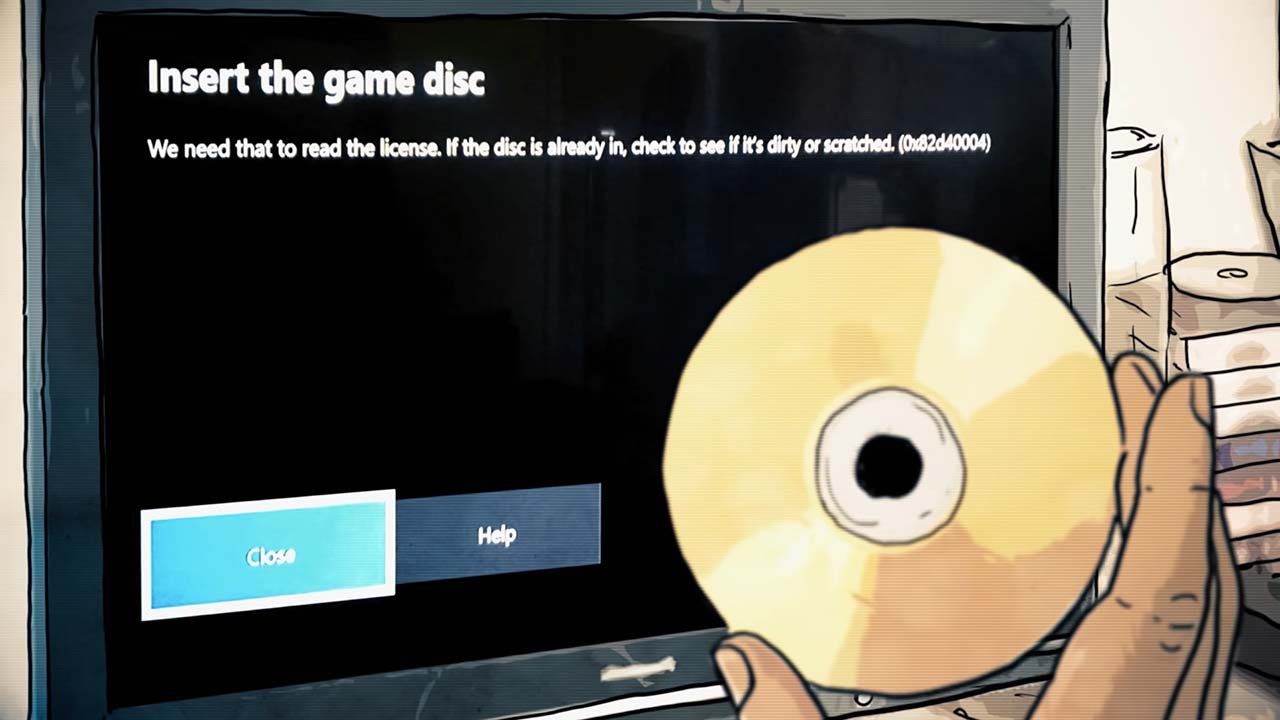

What the Xbox engineers accomplished is nothing short of technical wizardry. Their approach was also very consumer-friendly, as it respected existing game collections. Own a copy of, say, Final Fantasy XIII for Xbox 360? The game is backwards compatible! Insert the disc, and the emulated version will download from the Marketplace. The disc itself serves as your license to play the title. In contrast, Sony required repurchasing PS2 games digitally on PS4, with no path to use your existing collection, and never achieved PS3 backwards compatibility at all.

After the release of the Xbox One X in 2017, a mid-gen refresh targeting 4K gaming through raw horsepower rather than Sony's upscaling approach with the PS4 Pro, Nadella appeared to have given Spencer a blank check. With it, he went shopping for studios — after all, what worth was there in having the most powerful console of the generation if there were no games to play? Among the acquisitions were Ninja Theory (Hellblade), Playground Games (Forza Horizon), and Obsidian Entertainment (Fallout: New Vegas).

Oh, and speaking of Fallout: In 2020, Xbox acquired ZeniMax for $8.1 billion, their largest acquisition to date, at least until the Activision Blizzard deal several years later. With this purchase, Microsoft became the parent company of ZeniMax's studios, among them Bethesda, Arkane, id Software, MachineGames, Tango Gameworks, and ZeniMax Online.

Xbox became the proprietor of a vast library of intellectual property. And that 8:1 ratio when Spencer came in? It dropped to 2:1. While this is but a sample of what happened under Spencer’s Xbox, he managed to fend off what seemed to be utter annihilation, setting the stage for what’s next for Xbox.

The X

The documentary ends on a high note with the unveiling of the Series X at the 2019 Game Awards. The omission of the Series S is a byproduct of principal photography ending before it was announced. While I don't intend to do a full postmortem on a console whose story is still being written, leaving out the two-console strategy Microsoft embarked on would make this account incomplete. Thus, I’m extending the timeline to November 10, 2020: the release of the Xbox Series X/S.

By late 2019, Microsoft's product strategy for the upcoming generation had largely written itself. The Xbox One X catered to hardcore gamers seeking maximum performance. The Xbox One S became the budget option for price-conscious gamers. Game Pass was becoming an increasingly important aspect of the overall strategy, and garnered the acceptance Microsoft hoped for.

The Series X/S had been in development since at least 2018, when Spencer confirmed that Xbox was working on its next offering. Industry insiders highlighted an interesting development: Xbox’s next console was described as a ‘family of consoles’ codenamed ‘Scarlett,’ but sources at the time could not say how many. Months later, it surfaced that there were two: a high-end variant called ‘Anaconda’ and a lower-end one called ‘Lockhart.’

Announced at E3 2019, ‘Project Scarlett’ was pitched as a single product. When Spencer spoke of its key features (8K resolution, SSD storage, and raytracing), it was evident he was talking about Anaconda. After the Series X unveiling in December 2019, the Scarlett codename was used to refer to both the family of consoles and the Series X; Anaconda just... disappeared. A marketing spree was planned for the console the following year, only for it to be shut down due to the COVID-19 pandemic lockdowns. All this talk about the Series X, but where was Lockhart? Rumors insisted that it existed, and interest continued to grow over the months.

After the Xbox Series S was announced, Xbox’s X account revealed that the Series S had been hiding in plain sight, continuing the trend of easter eggs in Xbox presentations. Above, the console blends in with the rest of the items atop the cupboard.

Then came the leaks: in August 2020, a controller’s packaging confirmed the product name, followed by reveals of the console’s form factor and marketing materials in September. Xbox was left with no choice but to officially confirm what everyone suspected: the Xbox Series S, a digital-only, less powerful little brother to the Series X, which still promised to deliver next-gen performance. The reception was, by and large, positive: an affordable next-generation console, contrasting with the $500 price tags of the PS5 and Series X. It was effectively a Game Pass console, much as the One S was for the Xbox One family. Some drawbacks were theorized, but these wouldn’t become significant issues until much later.

‘Xbox Series X/S’ began to be used in all of Xbox’s marketing material. If the ‘Series X’ name was bad, this contraption is even worse. The problem with 'Series X/S' as a name is that it isn't actually one — it's notation. The family doesn't have a name separate from the products. PlayStation has 'PS5' and 'PS5 Digital Edition': both are PS5s. Nintendo has 'Switch' and 'Switch Lite': both are Switches. Microsoft has... 'Series X/S'? You can't pluralize it. You can't shorten it without losing meaning. Even saying 'I bought an Xbox' doesn't work: most assume it's the newest one, but which one? It's not a family name; it's an admission that Microsoft couldn't commit to either a unified brand or distinct products.

This leads me to how they're trying to have it both ways. The centerpiece of Xbox's offering is the Series X — early demonstrations of the platform's capabilities featured it exclusively. So why sell a lower-end version? Establishing the higher-end model as the baseline while simultaneously offering a less powerful one cripples the higher-end console by proxy. When titles must run on both the Series S and X to pass Microsoft's certification, which hardware will developers optimize for?

Microsoft took the wrong lessons. I should know — I bought in anyway.

I was optimistic heading into the generation. Microsoft had spent years acquiring studios — Ninja Theory, Obsidian, Double Fine, and the massive ZeniMax purchase. The Series X/S strategy made sense on paper: premium power and an accessible entry point. Game Pass meant day-one access to everything. I got a Series X near launch — miraculously easier to find than the PS5, which took me over a year of monthly Best Buy check-ins. I had the hardware. I had owned an Xbox One X. The Series X's value proposition to me was… playing the same games, slightly better.

COVID severely disrupted development pipelines across the industry. Sony and Microsoft both launched their next-gen consoles with relatively weak exclusive lineups. The difference was that Sony's studios recovered and, within 18 months, delivered titles such as Returnal, Ratchet & Clank: Rift Apart, and Horizon Forbidden West. Microsoft acquired studio after studio and still couldn't produce a competitive exclusive pipeline. The Series X wasn't just "a more powerful Xbox One X" at launch — it remained that way for years.